Execution on Intel® Xeon Phi™ co-processor

Speedup by parallelization

We measured the speedup on the Intel® Xeon Phi™ with the following program:

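```cpp
#include <stdio.h>
#include <stdlib.h>
#include <string.h>
#include <assert.h>
#include <omp.h>
#include <math.h>

int main(int argc, char *argv[]) {
    int numthreads;
    int n;

    assert(argc == 3 && "args: numthreads n");
    sscanf(argv[1], "%d", &numthreads);
    sscanf(argv[2], "%d", &n);

    printf("Init...\n");
    printf("Start (%d threads)...\n", numthreads);
    printf("%d test cases\n", n);

    int m = 1000000;                  // inner iterations per test case
    double ttime = omp_get_wtime();
    int i;
    double d = 0;

    // Run the enclosed block on the first co-processor (mic:0).
    #pragma offload target(mic:0)
    {
        // The n test cases are independent. Each one uses its own local
        // accumulator x, and the reduction combines the results into d,
        // so no two threads write the same variable.
        #pragma omp parallel for private(i) schedule(static) num_threads(numthreads) reduction(+:d)
        for (i = 0; i < n; ++i) {
            double x = 0;
            for (int j = 0; j < m; ++j) {
                x = sin(x) + 0.1 + j;
                x = pow(0.2, x) * j;
            }
            d += x;
        }
    }

    double time = omp_get_wtime() - ttime;
    fprintf(stderr, "%d %d %.6f\n", n, numthreads, time);  // "n threads seconds"
    printf("time: %.6f s\n", time);
    printf("Done d = %.6lf.\n", d);    // using d keeps the loop from being optimized away
    return 0;
}
```

Each of the $n$ test cases runs $m = 10^6$ iterations of the inner loop, so the program distributes a problem of size $n\cdot m$ among `numthreads` threads.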

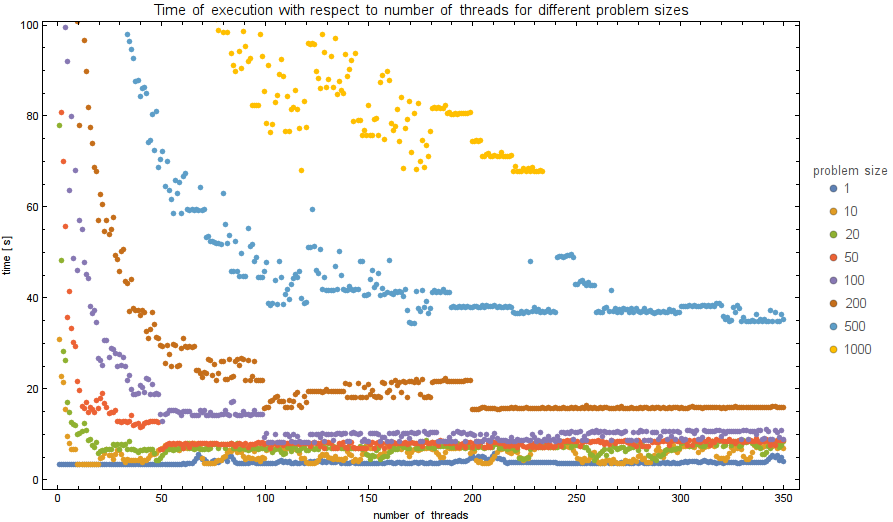

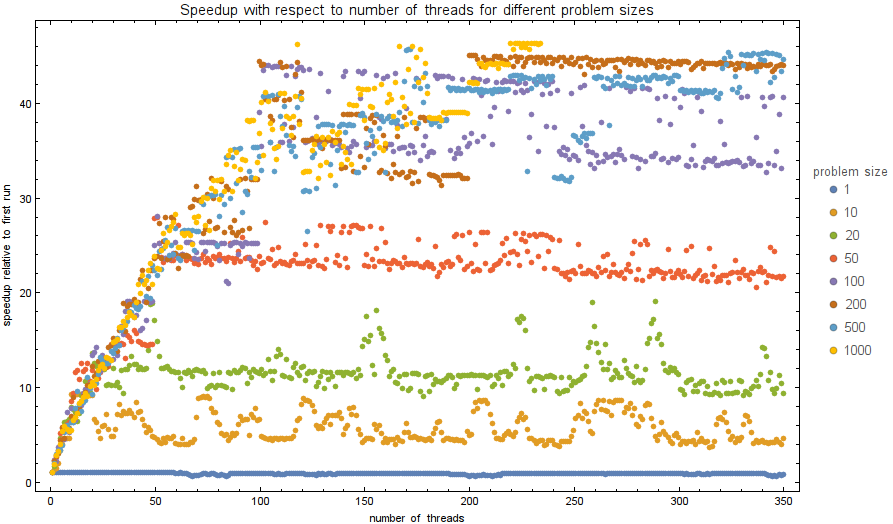

We measured the execution time for $n \in \{1, 10, 20, 50, 100, 200, 500, 1000\}$ and `numthreads` from $1$ to $350$. The figures show the resulting execution times and speedups.
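When reading the plots, it can help to compare them with a simple model. This model is our assumption and is not part of the measurements. With `schedule(static)`, the $n$ test cases are split into nearly equal contiguous chunks, so with $p$ threads the slowest thread handles $\lceil n/p \rceil$ test cases. That gives $T(p) \approx \lceil n/p \rceil\, m\, t_{\text{iter}}$ and an ideal speedup of $S(p) = T(1)/T(p) = n/\lceil n/p \rceil$. The sketch below evaluates this model. The names `max_chunk`, `ideal_speedup` and `print_ideal_speedups` are hypothetical helpers. The model ignores threading and offload overhead, and it also ignores the finite number of hardware threads (four per core on the Xeon Phi).

```cpp
#include <cassert>
#include <cstdio>
#include <initializer_list>

// Longest contiguous chunk a thread receives when n iterations are split
// into p nearly equal chunks, as schedule(static) does: ceil(n / p).
int max_chunk(int n, int p) {
    assert(n > 0 && p > 0);
    return (n + p - 1) / p;
}

// Idealized speedup under the model T(p) = ceil(n/p) * m * t_iter, i.e.
// S(p) = T(1) / T(p) = n / ceil(n/p). Overheads are ignored.
double ideal_speedup(int n, int p) {
    return static_cast<double>(n) / max_chunk(n, p);
}

// Print the model for every value of n used in the experiment.
void print_ideal_speedups(int p) {
    for (int n : {1, 10, 20, 50, 100, 200, 500, 1000})
        std::printf("n = %4d, p = %3d: ideal speedup %6.1f\n",
                    n, p, ideal_speedup(n, p));
}
```

For example, `print_ideal_speedups(100)` evaluates the model for every tested $n$ at 100 threads. For $n = 1$ the model predicts no speedup at any thread count, because a single test case cannot be split. For $n = 10$ the predicted speedup stops growing beyond 10 threads.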

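The code was compiled with the following command, which produced no warnings or errors. The `-qopt-report` options also ask icc for a vectorization report:

```bash
icc -openmp -O3 -qopt-report=2 -qopt-report-phase=vec -o test test.cpp
```

To offload to the Intel Xeon Phi, the user must then be logged in as root:

```bash
sudo su
```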

For the program to run correctly, the Intel compiler and runtime environment variables must be sourced:

```bash
source /opt/intel/bin/compilervars.sh intel64
```

Finally, the code was tested with the following loop, where `test` is the name of the compiled executable. The timing line that each run writes to stderr is appended to `speedups.txt`:

```bash
for n in 1 10 20 50 100 200 500 1000; do
    for nt in {1..350}; do
        echo $nt $n
        ./test $nt $n 2>> speedups.txt
    done
done
```
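After the loop, `speedups.txt` holds one line per run in the format written by `fprintf(stderr, "%d %d %.6f\n", n, numthreads, time)`: $n$, the thread count, and the elapsed time in seconds. Below is a minimal C++ sketch of turning these lines into speedups $S(p) = T(1)/T(p)$. It assumes every $n$ has a single-thread run in the same file. `Sample`, `parse_sample`, `read_samples` and `speedup_of` are hypothetical names and are not part of the original tooling.

```cpp
#include <cassert>
#include <cstddef>
#include <fstream>
#include <sstream>
#include <string>
#include <vector>

// One line of speedups.txt: n, number of threads, elapsed seconds.
struct Sample {
    int n;
    int threads;
    double seconds;
};

// Parse one line. Returns false for lines that do not start with three
// numbers, e.g. other messages that may also land in the log via 2>>.
bool parse_sample(const std::string& line, Sample& s) {
    std::istringstream in(line);
    return static_cast<bool>(in >> s.n >> s.threads >> s.seconds);
}

// Read every well-formed line of the log file (empty if it cannot be opened).
std::vector<Sample> read_samples(const std::string& path) {
    std::ifstream file(path);
    std::vector<Sample> samples;
    std::string line;
    Sample s{};
    while (std::getline(file, line))
        if (parse_sample(line, s))
            samples.push_back(s);
    return samples;
}

// Speedup T(1) / T(p) of samples[k] relative to the first single-thread run
// with the same n. Returns -1.0 if no such baseline was recorded.
double speedup_of(const std::vector<Sample>& samples, std::size_t k) {
    assert(k < samples.size());
    for (const Sample& b : samples)
        if (b.n == samples[k].n && b.threads == 1)
            return b.seconds / samples[k].seconds;
    return -1.0;
}
```

For instance, loop over `read_samples("speedups.txt")` and print `speedup_of(samples, k)` for each index `k` to get the data for a speedup plot. Because `2>>` appends, rerunning the loop adds duplicate lines. This sketch simply uses the first single-thread run it finds.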

Speedup by vectorization

Intel Xeon Phi has 512-bit vector registers, so a single instruction can operate on 8 double-precision (or 16 single-precision) values at the same time. Using these SIMD instructions is called vectorization, and it can greatly speed up code execution.
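The arithmetic is simple: a 512-bit register holds $512/64 = 8$ doubles or $512/32 = 16$ floats. Vectorization only applies to loops whose iterations are independent. In the inner `j` loop of the test program, each iteration depends on the value computed in the previous one, so that loop cannot be split across vector lanes. The C++ sketch below contrasts the two cases. `kVectorBits`, `lanes`, `daxpy` and `recurrence` are illustrative names chosen here. Whether a compiler actually vectorizes `daxpy` should be checked in the `-qopt-report` output rather than assumed.

```cpp
#include <cassert>
#include <cstddef>

// Width of the Xeon Phi vector registers in bits, as stated above.
constexpr std::size_t kVectorBits = 512;

// Number of elements of type T that fit into one vector register.
template <typename T>
constexpr std::size_t lanes() {
    return kVectorBits / (8 * sizeof(T));
}

static_assert(lanes<double>() == 8, "8 doubles per 512-bit register");
static_assert(lanes<float>() == 16, "16 floats per 512-bit register");

// y[k] += a * x[k]: the iterations are independent, so the compiler may
// process lanes<double>() elements per vector instruction. '#pragma omp simd'
// (OpenMP 4.0) requests this explicitly; when OpenMP is not enabled the pragma
// is ignored (possibly with a warning) and the loop is still correct.
void daxpy(std::size_t len, double a, const double* x, double* y) {
    assert(len == 0 || (x != nullptr && y != nullptr));
    #pragma omp simd
    for (std::size_t k = 0; k < len; ++k)
        y[k] += a * x[k];
}

// Each step needs the previous result, like the inner j loop of the test
// program, so this loop cannot be spread across vector lanes.
double recurrence(std::size_t len, double x0) {
    double x = x0;
    for (std::size_t k = 0; k < len; ++k)
        x = 0.5 * x + static_cast<double>(k);
    return x;
}
```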